@Amelia and I have cooked up a possible demo. There is a story to go with it. The post is long, but mostly pictures. You can reproduce the steps yourself by using the GraphRyder dashboard.

The story: I am a senior-level decision maker, maybe the minister of health or something. I am examining the output of the collective intelligence process in interactive form. I need to use interaction to make sense of it: reading the whole corpus is out of the question. Even reading the report is quite unlikely.

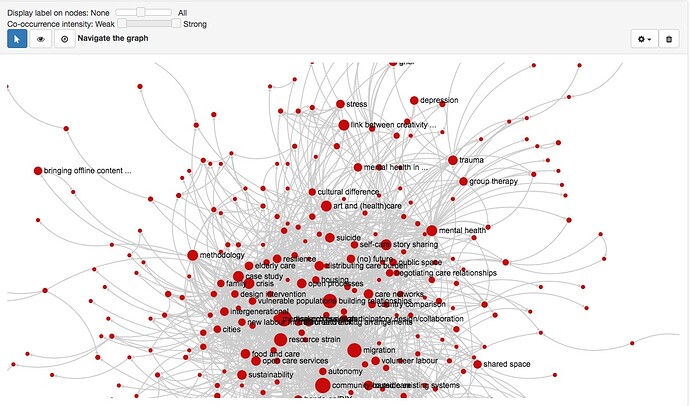

- I call up the co-occurrences graph. Too messy! Unreadable.

- I filter down (k = 6). Now I have a clear picture: the main conversation is divided into 2 groups of codes. A smaller one is clustered around "mental health", and it is connected to a larger one, clustered around

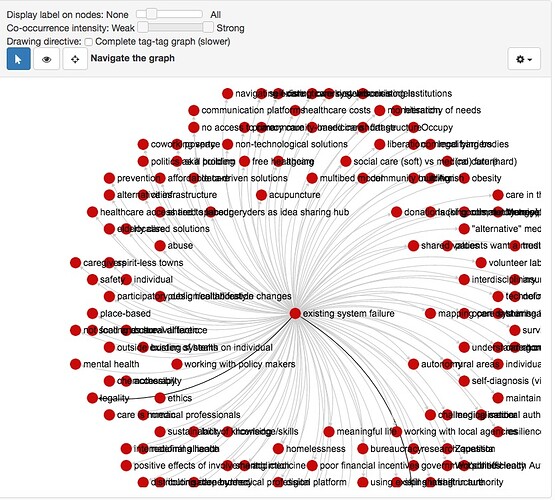

legality,community based care,resource strainandmigration. I am ignoringcase studyandresearch questionbecause they are non-semantic markers. The story here is this: community-based care is a set of solutions that the community sees most connected to two large care issues,mental healthandmigration.Resource strainandlegalitymight be interpreted as conditioning factors relevant to deploying those solutions. - I (the minister) am looking for technological solutions. So, I notice

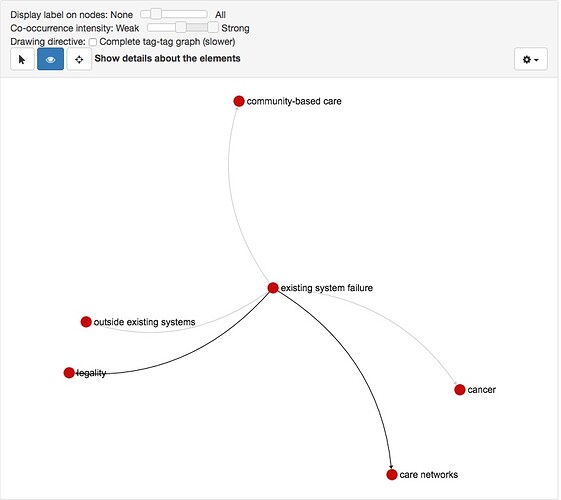

existing systems failurein the graph and I decide to look around it: hopefully somebody will have suggested solutions for systems failure. I open the ego network view of the code co-occurrences graph and center it onexisting systems failure. Again: too many connections. - I filter all the way up: the single strongest edge remaining connects "existing systems failure" to "legality" (k = 8). I start filtering back down in search of technological solutions. When I am down to k=3, "care networks" appears.

- This looks promising, so I click on the

care networkscode and get access to all the content (9 posts, 6 comments) that encode it. This allows me to learn how the community thinks about this thing calledcare networks. - But now I actually got curious about this very strong connection with legality. What is the nexus between systems failure and legality in the context of care? I can click on the edge between

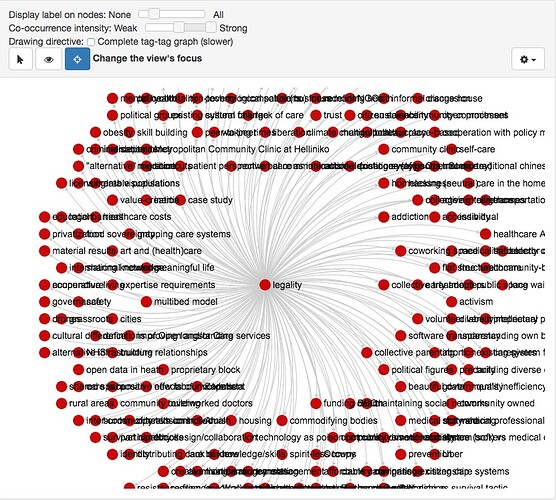

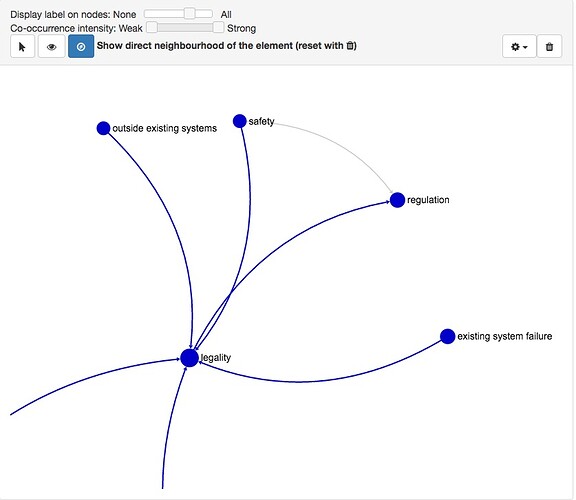

existing systems failureandlegalityto ask the community how it sees that connection play out. This gives me access to the 8 contributions associated to both codes. I do not even have to read the full content: titles like "Mortal issues for humans helping out", "No humane ghost in the machine", "Health Autonomy Center" and "Greece's shadow zero-cash health care system" tell me that sometimes the legal system is an obstacle for communities trying to solve care problems. There are problems with over-regulation, and now I understand that even though I might have a genius idea for an Open Source technology that will fix the problem of not being able to see my son's insulin levels, I will have other barriers to get through. Good to know--- I will certainly do research on how to bypass this. It turns out it is a major leitmotiv of the OpenCare research, as @Lakomaa can explain. - So now I decide to explore "legality" itself. It is a highly connected code.

I filter back up again, then gradually down. At k=8 I see “regulation”. At k=7 “safety” appears, also connected to “regulation”. It seems that perhaps legality isn’t just an obstacle, but brings up important considerations for me when I’m trying to make my technology. Let me research legal barriers, but let me also research what kinds of protections are in place for existing users of (failing) systems, so that I might not throw the baby out with the bathwater — so I can keep the good stuff, the safeguards (which, I now recall, are often the concerns people raise about Open Source) while getting around the obstructive regulation.

Now I have a top-level view of the problem; also, I have been able to zoom in on a subset of it that is particularly actionable for me. This knowledge was co-produced by myself (I was the one driving the dashboard) and the collective intelligence of the community. None of this came from one person: it came from an extended conversation and debate over a number of threads!
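For anyone who wants to see what the filtering in this story amounts to, here is a minimal sketch in plain Python. It does not use GraphRyder's actual code or API. It assumes that k is the number of contributions in which two codes co-occur (which is how the "k = 8" edge and its "8 contributions" read above), and the toy `contributions` data and the function names are made up for illustration.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy data: each contribution is the set of codes annotated on it.
contributions = [
    {"existing systems failure", "legality"},
    {"existing systems failure", "legality", "care networks"},
    {"existing systems failure", "legality", "regulation"},
    {"legality", "regulation", "safety"},
    {"existing systems failure", "care networks"},
    {"mental health", "community based care"},
]


def cooccurrence_edges(contributions):
    """Count, for each unordered pair of codes, how many contributions carry both."""
    edges = Counter()
    for codes in contributions:
        # Sorting makes (a, b) and (b, a) land on the same key.
        for a, b in combinations(sorted(codes), 2):
            edges[(a, b)] += 1
    return edges


def filter_edges(edges, k):
    """Keep only edges with at least k co-occurrences ("filtering up" raises k)."""
    return {pair: weight for pair, weight in edges.items() if weight >= k}


def ego_network(edges, center, k=1):
    """Keep only edges that touch `center` and have at least k co-occurrences."""
    return {
        pair: weight
        for pair, weight in edges.items()
        if center in pair and weight >= k
    }


edges = cooccurrence_edges(contributions)
strongest = filter_edges(edges, 3)
ego = ego_network(edges, "existing systems failure", k=2)
print(strongest)
print(ego)
```

With this toy data, filtering at k = 3 leaves only the edge between "existing systems failure" and "legality", and lowering the ego network filter to k = 2 brings "care networks" into view, which mirrors the filter-up-then-down move in the story.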

Works? @melancon | @Noemi | @Federico_Monaco